This post is a continuation of the story which began here.

Life for the teenager Computer Science was not entirely lonely, since he had several half-brothers, half-nephews, and lots of cousins, although he was the only one still living at home. In fact, his family would have required a William Faulkner or a Patrick White to do it justice.

The oldest of Mathematics’ children was Geometry, whom CS did not know well because he did not visit very often. When he did visit, G would always bring a sketchpad and make drawings, while the others talked around him. What the boy had heard was that G had been very successful early in his life, with a high-powered job to do with astronomy at someplace like NASA and with lots of people working for him, and with business trips to Egypt and Greece and China and places. But then he’d had an illness or a nervous breakdown, and thought he was traveling through the fourth dimension. CS had once overheard Maths telling someone that G had an “identity crisis”, and could not see the point of life anymore, and he had become an alcoholic. He didn’t speak much to the rest of the family, except for Algebra, although all of them still seemed very fond of him, perhaps because he was the oldest brother.

Continue reading ‘Computer science, love-child: Part 2’

Computer Science, love-child

With the history and pioneers of computing in the British news this week, I’ve been thinking about a common misconception: many people regard computer science as very closely related to Mathematics, perhaps even a sub-branch of Mathematics. Mathematicians and physical scientists, who often know little, and that little often outdated, about modern computer science and software engineering, are among the worst offenders here. For some reason, they often think that computer science consists of Fortran programming and the study of algorithms, which has been a long way from the truth for, oh, the last few decades. (I have past personal experience of the online vitriol which ignorant pure mathematicians can unleash on those who dare to suggest that computer science might involve the application of ideas from philosophy, economics, sociology or ecology.)

So here’s my story: Computer Science is the love-child of Pure Mathematics and Philosophy.

Alan Turing

Yesterday, I reported on the restoration of the world’s oldest, still-working modern computer. Last night, British Prime Minister Gordon Brown apologized for the country’s treatment of Alan Turing, computer pioneer. In the words of Brown’s statement:

“Turing was a quite brilliant mathematician, most famous for his work on breaking the German Enigma codes. It is no exaggeration to say that, without his outstanding contribution, the history of World War Two could well have been very different. He truly was one of those individuals we can point to whose unique contribution helped to turn the tide of war. The debt of gratitude he is owed makes it all the more horrifying, therefore, that he was treated so inhumanely. In 1952, he was convicted of ‘gross indecency’ – in effect, tried for being gay. His sentence – and he was faced with the miserable choice of this or prison – was chemical castration by a series of injections of female hormones. He took his own life just two years later.”

It might be considered that this apology required no courage of Brown.

This is not the case. Until very recently, and perhaps still today, there were people who disparaged and belittled Turing’s contribution to computer science and computer engineering. The conventional academic wisdom is that he was only good at the abstract theory and at the formal mathematizing (as in his “schoolboy essay” proposing a test to distinguish human from machine interlocutors), and not good for anything practical. This belief is false. As the philosopher and historian B. Jack Copeland has shown, Turing was actively and intimately involved in the design and construction work (mechanical & electrical) of creating the machines developed at Bletchley Park during WWII, the computing machines which enabled Britain to crack the communications codes used by the Germans.

Perhaps, like myself, you imagine this revision to conventional wisdom would be uncontroversial. Sadly, not. On 5 June 2004, I attended a symposium in Cottonopolis to commemorate the 50th anniversary of Turing’s death. At this symposium, Copeland played a recording of an oral-history interview with engineer Tom Kilburn (1921-2001), first head of the first Department of Computer Science in Britain (at the University of Manchester), and also one of the pioneers of modern computing. Kilburn and Turing had worked together in Manchester after WW II. The audience heard Kilburn stress to his interviewer that what he learnt from Turing about the design and creation of computers was all high-level (ie, abstract) and not very much, indeed only about 30 minutes’ worth of conversation. Copeland then produced evidence (from signing-in books) that Kilburn had attended a restricted, invitation-only, multi-week, full-time course on the design and engineering of computers which Turing had presented at the National Physical Laboratory shortly after the end of WW II, a course organized by the British Ministry of Defence to share some of the learnings of the Bletchley Park people in designing, building and operating computers. If Turing had so little of practical relevance to contribute to Kilburn’s work, why then, one wonders, would Kilburn have turned up each day to his course?

That these issues were still fresh in the minds of some people was shown by the Q&A session at the end of Copeland’s presentation. Several elderly members of the audience, clearly supporters of Kilburn, took strident and emotive issue with Copeland’s argument, with one of them even claiming that Turing had contributed nothing to the development of computing. I repeat: this took place in Manchester 50 years after Turing’s death! Clearly there were people who did not like Turing, or in some way had been offended by him, and who were still extremely upset about it half a century later. They were still trying to belittle his contribution and his practical skills, despite the factual evidence to the contrary.

I applaud Gordon Brown’s courage in officially apologizing to Alan Turing, an apology which at least ensures the historical record is set straight for what our modern society owes this man.

POSTSCRIPT #1 (2009-10-01): The year 2012 will be a centenary year of celebration of Alan Turing.

POSTSCRIPT #2 (2011-11-18): It should also be noted, concerning Mr Brown’s statement, that Turing died from eating an apple laced with cyanide. He was apparently in the habit of eating an apple each day. These two facts are not, by themselves, sufficient evidence to support a claim that he took his own life.

POSTSCRIPT #3 (2013-02-15): I am not the only person to have questioned the coroner’s official verdict that Turing committed suicide. The BBC reports that Jack Copeland notes that the police never actually tested the apple found beside Turing’s body for traces of cyanide, so it is quite possible it had no traces. The possibility remains that he died from an accidental inhalation of cyanide or that he was deliberately poisoned. Given the evidence, the only rational verdict is an open one.

Switch WITCH

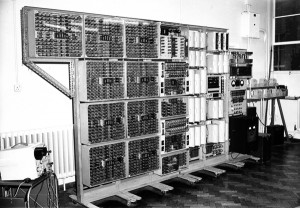

The Guardian today carries a story about an effort at the UK National Museum of Computing at Bletchley Park to install and restore the world’s oldest working modern electric computer, the Harwell Dekatron Computer (aka the WITCH, pictured here), built originally for the UK Atomic Energy Research Establishment at Harwell in 1951. The restoration is being done by the UK Computer Conservation Society.

Note: The Guardian claims this to be the world’s oldest working computer. I am sure there are older “computers” still working elsewhere, if we assume a computer is a programmable device. As late as 1985, in Harare, I saw at work in factories programmable textile and brush-making machinery which had been built in Britain more than a century earlier.

“One of the things that attracted us to the project was that it was built from standard off-the-shelf Post Office components, of which we have a stock built up for Colossus,” says Frazer. “And we have some former Post Office engineers who can do that sort of wiring.”

Frazer says he can imagine the machine’s three designers – Ted Cooke-Yarborough, Dick Barnes and Gurney Thomas – going to the stores with a list and saying: “We’d like these to build a computer, please.”

Dick Barnes, now a sprightly 88, says: “We had to build [the machine] from our existing resources or we might not have been allowed to build it at all. The relay controls came about because that was my background: during the war I had produced single-purpose calculating devices using relays. We knew it wasn’t going to be a fast computer, but it was designed to fulfil a real need at a time when the sole computing resources were hand-turned desk calculators.”

Computing-as-interaction

In its brief history, computer science has enjoyed several different metaphors for the notion of computation. From the time of Charles Babbage in the nineteenth century until the mid-1960s, most people thought of computation as calculation, or the manipulation of numbers. Indeed, the English word “computer” was originally used to describe a person undertaking arithmetical calculations. With widespread digital storage and processing of non-numerical information from the 1960s onwards, computation was re-conceptualized more generally as information processing, or the manipulation of numerical-, text-, audio- or video-data. This metaphor is probably still the prevailing view among people who are not computer scientists. From the late 1970s, with the development of various forms of machine intelligence, such as expert systems, a yet more general metaphor of computation as cognition, or the manipulation of ideas, became widespread, at least among computer scientists. The fruits of this metaphor have been realized, for example, in the advanced artificial intelligence technologies which have now been a standard part of desktop computer operating systems since the mid-1990s. Windows95, for example, included a Bayesnet for automated diagnosis of printer faults.

With the growth of the Internet and the Web over the last two decades, we have reached a position where a new metaphor for computation is required: computation as interaction, or the joint manipulation of ideas and actions. In this metaphor, computation is something which happens by and through the communications which computational entities have with one another. Cognition and intelligent behaviour are not something which a computer does on its own, or not merely that, but something which arises through its interactions with other intelligent computers to which it is connected. The network is the computer, in Sun’s famous phrase. This viewpoint is a radical reconceptualization of the notion of computation.

In this new metaphor, computation is an activity which is inherently social, rather than solitary, and this view leads to new ways of conceiving, designing, developing and managing computational systems. One example of the influence of this viewpoint is the model of software as a service, for example in Service Oriented Architectures. In this model, applications are no longer “compiled together” in order to function on one machine (single user applications), or distributed applications managed by a single organization (such as most of today’s Intranet applications), but instead are societies of components:

- These components are viewed as providing services to one another rather than being compiled together. They may not all have been designed together or even by the same software development team; they may be created, operated and de-commissioned according to different timescales; they may enter and leave different societies at different times and for different reasons; and they may form coalitions or virtual organizations with one another to achieve particular temporary objectives. Examples are automated procurement systems comprising all the companies connected along a supply chain, or service creation and service delivery platforms for dynamic provision of value-added telecommunications services.

- The components and their services may be owned and managed by different organizations, and thus have access to different information sources, have different objectives, have conflicting preferences, and be subject to different policies or regulations regarding information collection, storage and dissemination. Health care management systems spanning multiple hospitals or automated resource allocation systems, such as Grid systems, are examples here.

- The components are not necessarily activated by human users but may also carry out actions in an automated and co-ordinated manner when certain conditions hold true. These pre-conditions may themselves be distributed across components, so that action by one component requires prior co-ordination and agreement with other components. Simple multi-party database commit protocols are examples of this, but significantly more complex co-ordination and negotiation protocols have been studied and deployed, for example in utility computing systems and in ad hoc wireless networks.

- Intelligent, automated components may even undertake self-assembly of software and systems, to enable adaptation or response to changing external or internal circumstances. An example is the creation of on-the-fly coalitions in automated supply-chain systems in order to exploit dynamic commercial opportunities. Such systems resemble those of the natural world and human societies much more than they do the example arithmetical-calculation programs typically taught in Fortran classes, and so ideas from biology, ecology, statistical physics, sociology, and economics play an increasingly important role in computer science.
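The third point in the list above mentions simple multi-party commit protocols, where no component acts until all have agreed. As a rough illustration only, here is a minimal sketch of one such protocol, a two-phase commit, in Python. The class and function names are hypothetical, each component's pre-condition is reduced to a single boolean, and failures, timeouts and logging are all ignored.

```python
class Participant:
    """A component whose local pre-condition is modelled as one boolean (an assumption)."""

    def __init__(self, name, can_commit):
        self.name = name
        self.can_commit = can_commit
        self.state = "idle"

    def prepare(self):
        """Phase 1: vote on whether this component's pre-conditions hold."""
        self.state = "prepared" if self.can_commit else "refused"
        return self.can_commit

    def commit(self):
        self.state = "committed"

    def abort(self):
        self.state = "aborted"


def two_phase_commit(participants):
    """Commit only if every participant votes yes; otherwise abort everywhere."""
    # Phase 1: ask every participant for its vote (a list, so nobody is skipped).
    votes = [p.prepare() for p in participants]
    decision = all(votes)
    # Phase 2: tell every participant the joint decision.
    for p in participants:
        if decision:
            p.commit()
        else:
            p.abort()
    return decision


# Hypothetical supply-chain example: both parties agree, so the action goes ahead.
agreed = [Participant("supplier", True), Participant("shipper", True)]
agreed_result = two_phase_commit(agreed)

# Here one party's pre-condition fails, so nobody acts.
blocked = [Participant("supplier", True), Participant("shipper", False)]
blocked_result = two_phase_commit(blocked)

print(agreed_result, [p.state for p in agreed])
print(blocked_result, [p.state for p in blocked])
```

The point of the sketch is only that the action of any one component is conditional on the agreement of all the others; the more complex negotiation protocols mentioned above replace the single yes/no vote with richer exchanges.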

How should we exploit this new metaphor of computation as a social activity, as interaction between intelligent and independent entities, adapting and co-evolving with one another? The answer, many people believe, lies with agent technologies. An agent is a computer programme capable of flexible and autonomous action in a dynamic environment, usually an environment containing other agents. In this abstraction, we have software entities called agents, encapsulated, autonomous and intelligent, and we have demarcated the society in which they operate, a multi-agent system. Agent-based computing concerns the theoretical and practical working through of the details of this simple two-level abstraction.

Reference:

Text edited slightly from the Executive Summary of:

M. Luck, P. McBurney, S. Willmott and O. Shehory [2005]: The AgentLink III Agent Technology Roadmap. AgentLink III, the European Co-ordination Action for Agent-Based Computing, Southampton, UK.

Action-at-a-distance

For at least 22 years, I have heard business presentations (ie, not just technical presentations) given by IT companies which mention client-server architectures. For the last 17 of those years, this is not surprising, since both the Hyper-Text Transfer Protocol (HTTP) and the World-Wide Web (WWW) use this architecture. In a client-server architecture, one machine (the client) requests that some action be taken by another machine (the server), which responds to the request. For HTTP, the standard request by the client is for the server to send to the client some electronic file, such as a web-page. The response by the server is not necessarily to undertake the action requested. Indeed, the specifications of HTTP define 41 responses (so-called status codes), including outright refusal by the server (Client Error 403 “Forbidden”), and allow for hundreds more to be defined. Typically, one server will be configured to respond to many simultaneous or near-simultaneous client requests. The functions of client and server are conceptually quite distinct, although of course, one machine may undertake both functions, and a server may even have to make a request as a client to another server in order to respond to an earlier request from its clients. As an analogy, consider a library which acts like a server of books to its readers, who are its clients; a library may have to request a book via inter-library loan from another library in order to satisfy a reader’s request.
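To make the library analogy concrete, here is a small Python sketch of a server that may fulfil a request, refuse it, or turn client itself and ask another server. It is a toy in-memory model, not a real web server: the `Server` class, the paths and the documents are all invented for illustration, and only the status codes (taken from Python's standard `http.HTTPStatus`) correspond to real HTTP.

```python
from http import HTTPStatus


class Server:
    """A toy in-memory 'library' (hypothetical; no real networking involved)."""

    def __init__(self, name, documents, forbidden=(), upstream=None):
        self.name = name
        self.documents = dict(documents)
        self.forbidden = set(forbidden)
        self.upstream = upstream  # another Server this one may ask, acting as its client

    def handle(self, path):
        """Respond to a client's request with a (status, body) pair."""
        if path in self.forbidden:
            # The server is free to refuse outright.
            return HTTPStatus.FORBIDDEN, ""
        if path in self.documents:
            return HTTPStatus.OK, self.documents[path]
        if self.upstream is not None:
            # Inter-library loan: this server becomes a client of another server.
            return self.upstream.handle(path)
        return HTTPStatus.NOT_FOUND, ""


national = Server("national", {"/rare-book": "text of the rare book"})
local = Server(
    "local",
    {"/novel": "text of the novel"},
    forbidden={"/staff-only"},
    upstream=national,
)

for path in ["/novel", "/rare-book", "/staff-only", "/missing"]:
    status, body = local.handle(path)
    print(path, status.value, status.phrase)
```

The reader asking `local` for `/rare-book` never learns that the request was passed on; from the client's side there is only a request and a response, which may be a refusal.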

Since the rise of file sharing, particularly illegal file sharing, over a decade ago, it has also been common to hear talk about Peer-to-Peer (P2P) architectures. Conceptually, in these architectures all machines are viewed equally, and none are especially distinguished as servers. Here, there is no central library of books; rather, each reader him or herself owns some books and is willing to lend them to any other reader as and when needed. Originally, peer-to-peer architectures were invented to circumvent laws on copyright, but they turn out (as do most technical innovations) to have other, more legal, uses – such as the distributed storage and sharing of electronic documents in large organizations (eg, X-ray images in networks of medical clinics).

Both client-server and P2P architectures involve attempts at remote control. A client or a peer-machine makes a request of another machine (a server or another peer, respectively), to undertake some action(s) at the location of the second machine. The second machine receiving the request from the first may or may not execute the request. This has led me to think about models of such action-at-a-distance.

Imagine we have two agents (human or software), named A and B, at different locations, and a resource, named X, at the same location as B. For example, X could be an electron microscope, B the local technician at the site of the microscope, and A a remote user of the microscope. Suppose further that agent B can take actions directly to control resource X. Agent A may or may not have permissions or powers to act on X.

Then, we have the following five possible situations:

1. Agent A controls X directly, without agent B’s involvement (ie, A has remote access to and remote control over resource X).

2. Agent A commands agent B to control X (ie, A and B have a master-slave relationship; some client-server relationships would fall into this category).

3. Agent A requests agent B to control X (ie, both A and B are autonomous agents; P2P would be in this category, as well as many client-server interactions).

4. Both agent A and agent B need to take actions jointly to control X (eg, the double-key system for launch of nuclear missiles in most nuclear-armed forces; coalitions of agents would be in this category).

5. Agent A has no powers, neither direct nor indirect, to control resource X.
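The five situations can be written down as a small Python model. This is only a sketch under strong simplifying assumptions: the names are mine, agent A is assumed always to initiate, and agent B's whole decision (which in practice might involve a dialog) is collapsed into a single boolean. The function returns the set of agents that end up acting on X.

```python
from enum import Enum


class Relationship(Enum):
    DIRECT_CONTROL = 1  # A controls X without B's involvement
    COMMAND = 2         # A commands B, and B must comply
    REQUEST = 3         # A asks B, and B may refuse
    JOINT_ACTION = 4    # A and B must both act
    NO_POWER = 5        # A cannot affect X at all


def actors_on_x(relationship, b_willing):
    """Given that A initiates, return the set of agents that end up acting on X.

    b_willing is whether B agrees to act, in those cases where B has a choice.
    """
    if relationship is Relationship.DIRECT_CONTROL:
        return frozenset({"A"})
    if relationship is Relationship.COMMAND:
        return frozenset({"B"})  # B's willingness is irrelevant
    if relationship is Relationship.REQUEST:
        return frozenset({"B"}) if b_willing else frozenset()
    if relationship is Relationship.JOINT_ACTION:
        return frozenset({"A", "B"}) if b_willing else frozenset()
    return frozenset()  # NO_POWER: nothing happens to X


for relationship in Relationship:
    for b_willing in (True, False):
        actors = sorted(actors_on_x(relationship, b_willing))
        print(relationship.name, "B willing:", b_willing, "->", actors)
```

Even in this crude form, the model shows where B's autonomy matters: only in Cases 3 and 4 does the outcome depend on B's agreement, which is why those are the cases that call for persuasion or negotiation.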

As far as I can tell, these five situations exhaust the possible relationships between agents A and B acting on resource X, at least for those cases where potential actions on X are initiated by agent A. From this outline, we can see the relevance of much that is now being studied in computer science:

- Action co-ordination (Cases 1-5)

- Command dialogs (Case 2)

- Persuasion dialogs (Case 3)

- Negotiation dialogs (dialogs to divide a scarce resource) (Case 4)

- Deliberation dialogs (dialogs over what actions to take) (Cases 1-4)

- Coalitions (Case 4).

To the best of my knowledge, there is as yet no formal theory which encompasses these five cases. (I welcome any suggestions or comments to the contrary.) Such a formal theory is needed as we move beyond Web 2.0 (the web as means to create and sustain social networks) to reification of the idea of computing-as-interaction (the web as a means to co-ordinate joint actions).

Reference:

Network Working Group [1999]: Hypertext Transfer Protocol – HTTP/1.1. Technical Report RFC 2616. Internet Engineering Task Force.

Computers in conflict

Academic publishers Springer have just released a new book on Argumentation in Artificial Intelligence. From the blurb:

“This volume is a systematic, expansive presentation of the major achievements in the intersection between two fields of inquiry: Argumentation Theory and Artificial Intelligence. Contributions from international researchers who have helped shape this dynamic area offer a progressive development of intuitions, ideas and techniques, from philosophical backgrounds, to abstract argument systems, to computing arguments, to the appearance of applications producing innovative results. Each chapter features extensive examples to ensure that readers develop the right intuitions before they move from one topic to another.

In particular, the book exhibits an overview of key concepts in Argumentation Theory and of formal models of Argumentation in AI. After laying a strong foundation by covering the fundamentals of argumentation and formal argument modeling, the book expands its focus to more specialized topics, such as algorithmic issues, argumentation in multi-agent systems, and strategic aspects of argumentation. Finally, as a coda, the book explores some practical applications of argumentation in AI and applications of AI in argumentation.”

References:

Previous posts on argumentation can be found here.

Iyad Rahwan and Guillermo R. Simari (Editors) [2009]: Argumentation in Artificial Intelligence. Berlin, Germany: Springer.

The future is not what it was

The Internet, as imagined in 1969, complete with free sexism. (Hat tip: SW).

The undead

COBOL turns 50 this year, but still has the energy and enthusiasm of someone much younger. Perhaps 50 is the new 30, or even the new 14!

But are companies really relying on a half-century-old invention to handle large chunks of their dealings? Mike Madden, development service manager with the catalogue-shopping firm JD Williams, believes so.

Better known for its online stores, such as Simply Be and Fifty Plus, Madden says JD Williams remains highly dependent on Cobol applications. “We have a huge commitment to Cobol,” he says. “About 50% of our mainframe systems use it.”

Why? “Simple – we haven’t found anything faster than Cobol for batch-processing,” Madden says. “We use other languages, such as Java, for customer-facing websites, but Cobol is for order processing. The code matches business logic, unlike other languages.”

Brand immortality

Catching up with films I missed when they first appeared, I have just watched that action-spy thriller of the almost-over Cold War, Little Nikita, which first appeared in 1988.

The story was fairly predictable, and the most exciting moment for me occurred at minute 77, when two of the protagonists, trying to flee San Diego for Mexico, turned a corner on which was located a NYNEX Business Center. These were a nationwide chain of 80 retail computer hardware and services outlets most of which NYNEX bought from IBM in 1986 (according to rumour, after a handshake over an inter-CEO game of golf), and then sold in 1991 to Computerland. When NYNEX owned them, they comprised the third-largest non-franchise network of retail computer outlets in the US.

One of the seven Baby Bells (aka RBOCs) created by the break up of the Bell System in 1984, NYNEX was the only one to pursue an adult career as an IT services company, at one point earning sufficient revenues from software and related services to be placed in the Top-10 largest US software companies. For all the synergies, however, telecommunications and software development are sufficiently different businesses, and/or NYNEX senior managers cared insufficiently for these differences, that NYNEX never appeared to take seriously their role as a software company. Having cured itself of its untypical desire to be a leading software house by re-selling most of its purchases in this sector, NYNEX, a few mergers later, has now become Verizon.

It is nice to think that, in centuries to come, the NYNEX Business Centers brand will live on in the moving pictures.